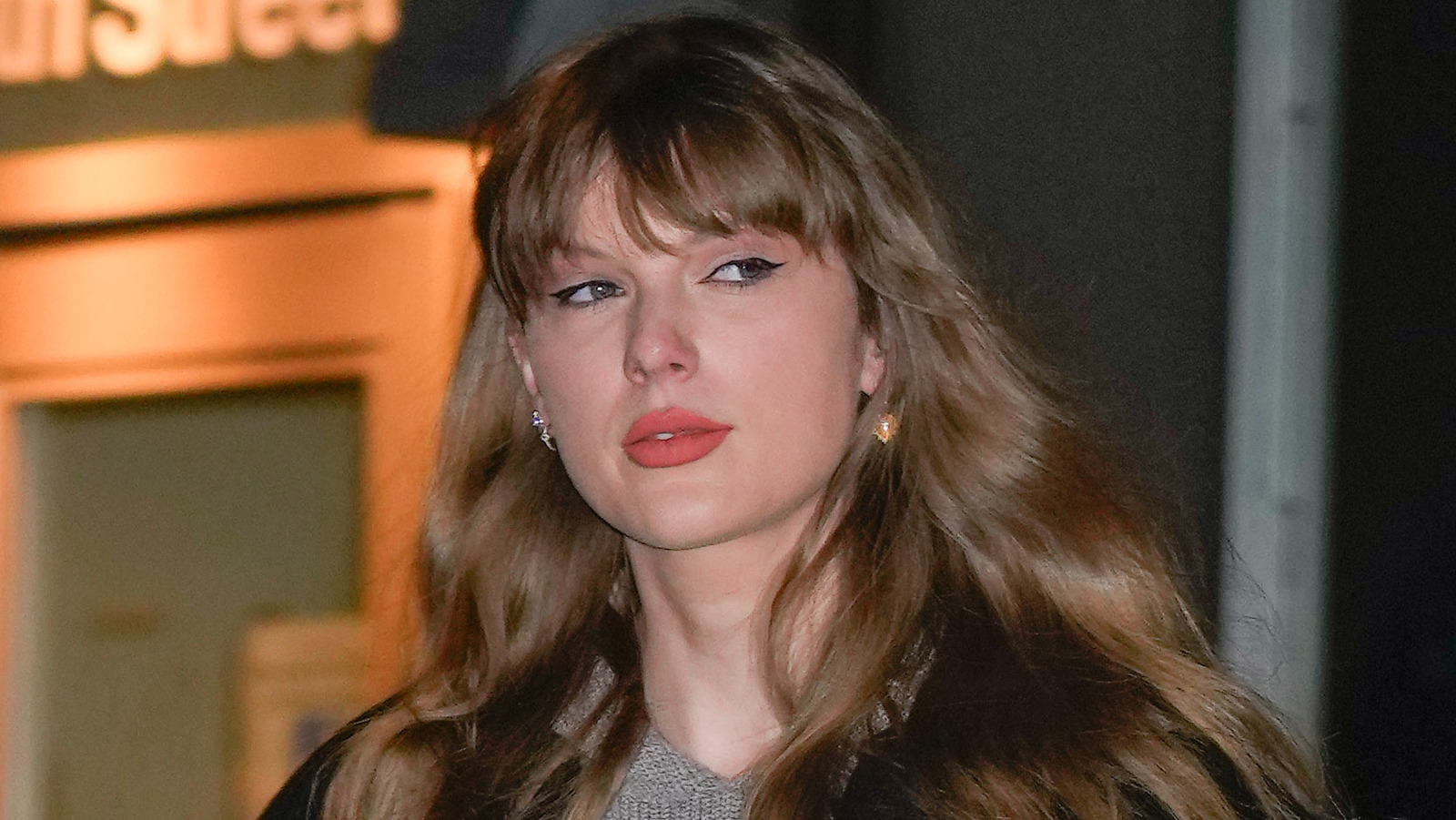

Several social networks briefly hosted the AI-generated Swift images, including X (formerly Twitter), Facebook, and Reddit, according to CNN. Though all three companies have removed the content, critics argue that a more proactive approach is needed to address this problem going forward, particularly now that generative AI is readily available to the average person. Many websites and online communities dabble in the creation and dissemination of this kind of explicit content, and while some states and countries have laws that make doing so illegal, others haven’t kept pace with the technology.

Swift isn’t the first celebrity to fall victim to the technology, but her popularity has brought the issue to the public’s attention in a big way, and that may help spur legislation that addresses the problem. That outcome is all but guaranteed if, as the Daily Mail claims, Swift is considering legal action and that results in an actual lawsuit or two. Meanwhile, though offline AI generators make it effectively impossible to prevent the creation of this kind of explicit material, companies may soon use AI-powered proactive scanning to detect these images before they’re made publicly available; similar technology is already used to detect CSAM.